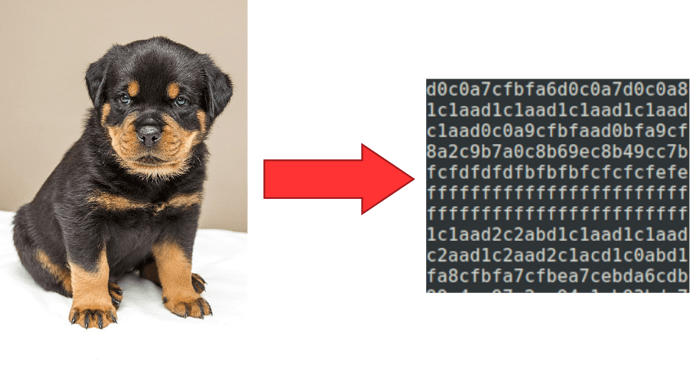

I think that it will be practical to embed images as text input for language models using hexadecimal, base64, or some other encoding. This could work well for Argos Translate or LibreTranslate where the translation pipeline is already set up for text. However, I don’t think this is currently possible because the text strings are too long to pass into the Transformer model.

from PIL import Image

image = Image.open("my_image.jpg")

IMAGE_SIZE = 100

image = image.resize(

(int(IMAGE_SIZE * (image.size[0] / image.size[1])), IMAGE_SIZE)

)

def pixel_to_str(pixel):

to_return = str()

for channel in pixel:

assert channel >= 0

assert channel < 256

to_return += channel.to_bytes(1, byteorder="big").hex()

return to_return

text_embedding = str()

for x in range(0, image.size[0]):

for y in range(0, image.size[1]):

text_embedding += pixel_to_str(image.getpixel((x, y)))

print(text_embedding)

Yeah text size would get really large with base64 encoding.

1 Like

In the example above I’m embedding each pixel in 6 hex digits, for example, “ff00a4”. If it’s encoded in base64 it would be a shorter text sequence but more difficult to interpret. Either way it’s a very long text sequence even for a small image.

100 px * 100 px * 6 chars/px = 60000 chars

Is the goal to permit text to contain images and for those images to be preserved during translation? Could a hashing method with tags similar to the XML/HTML tags work?

Images → crc32 (or md5) → replace images with crc32 tags (and keep dictionary) → translation with tags → replace crc32 tags with original images.

1 Like

Embedding the images as text is so that the same neural network that processes the text for translation could also read or write image data.

This hashing mechanism could work for passing images through the translation system without accessing their content. We already can pass images in html and other document formats through LibreTranslate by parsing the XML and only translating text data leaving the images intact.

1 Like