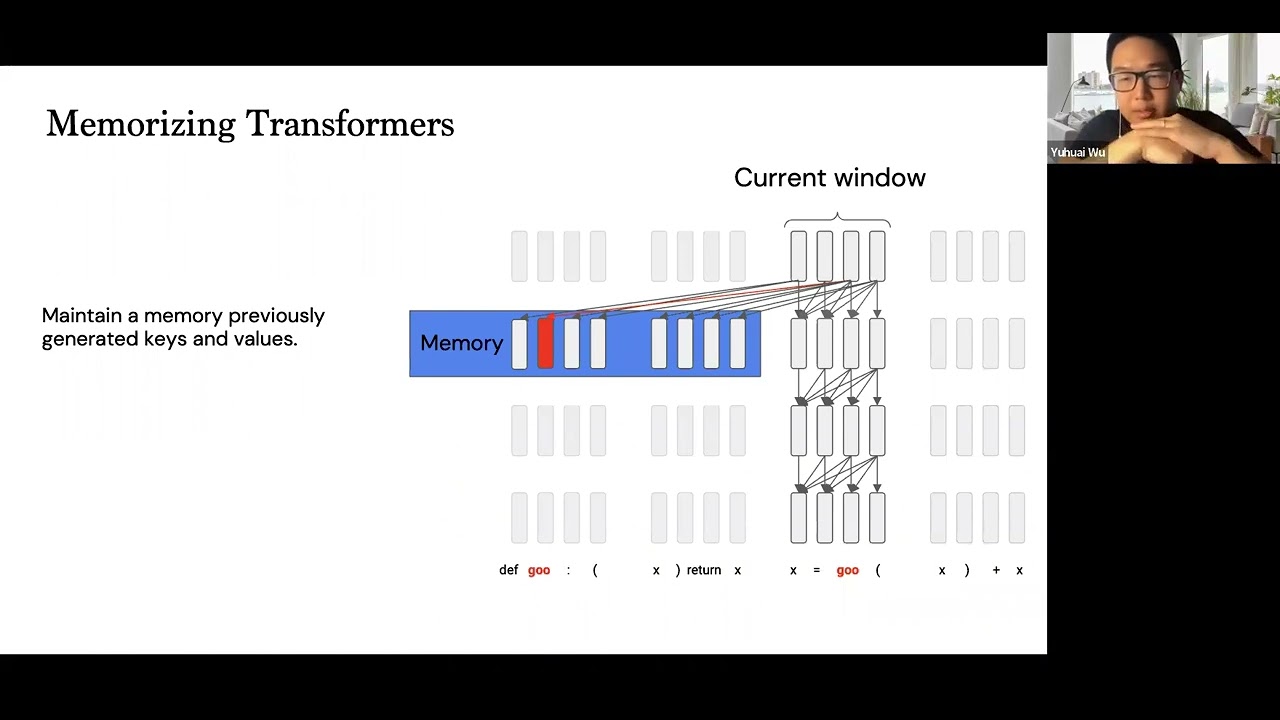

My understanding of this presentation is that they break the text into chunks so that each chunk fits into a Transformer model. They save the keys and values from previous chunks and let the Transformer attend to these saved memory values.
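To make that idea concrete, here is a rough toy sketch of chunked attention with a key/value memory. It is only my reading of the idea, not the presentation's actual method: the names `process_in_chunks`, `chunk_size`, and `mem_len` are made up for illustration, there is a single attention head, the token vectors are used directly as queries, keys, and values (no learned projections), and there is no causal masking.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


def process_in_chunks(tokens: List[Vector], chunk_size: int, mem_len: int) -> List[Vector]:
    """Process tokens chunk by chunk, attending to saved keys/values.

    Assumption: keys and values are the raw token vectors. A real model
    would compute them with learned projections in every layer.
    """
    mem_keys: List[Vector] = []
    mem_values: List[Vector] = []
    outputs: List[Vector] = []
    for start in range(0, len(tokens), chunk_size):
        chunk = tokens[start:start + chunk_size]
        # The current chunk sees the saved memory plus itself.
        keys = mem_keys + chunk
        values = mem_values + chunk
        outputs.extend(attend(query, keys, values) for query in chunk)
        # Keep only the most recent mem_len entries for the next chunk.
        mem_keys = keys[-mem_len:] if mem_len > 0 else []
        mem_values = values[-mem_len:] if mem_len > 0 else []
    return outputs
```

With `mem_len=0` every chunk is processed in isolation, so comparing that against a positive `mem_len` shows how much the saved keys and values change the output for later chunks.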

Argos Translate currently uses Stanza to split long input texts into sentence chunks and translate each sentence independently.
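Roughly, the sentence-by-sentence approach looks like the sketch below. This is not Argos Translate's actual code: `translate_by_sentence` and `stanza_splitter` are hypothetical helpers I wrote for illustration, and the translator is passed in as a plain function. The Stanza part assumes the package is installed and the model for the language has already been downloaded.

```python
from typing import Callable, List


def translate_by_sentence(
    text: str,
    split_sentences: Callable[[str], List[str]],
    translate_sentence: Callable[[str], str],
) -> str:
    """Split text into sentences and translate each one independently.

    No context is shared between sentences, which is the limitation
    that a memory over previous chunks would address.
    """
    sentences = split_sentences(text)
    return " ".join(translate_sentence(sentence) for sentence in sentences)


def stanza_splitter(lang: str = "en") -> Callable[[str], List[str]]:
    """Build a sentence splitter backed by Stanza's tokenizer.

    Assumption: stanza is installed and the model for `lang` is available
    locally (for example after running stanza.download(lang)).
    """
    import stanza  # imported lazily so the rest of the sketch runs without it

    nlp = stanza.Pipeline(lang=lang, processors="tokenize")

    def split(text: str) -> List[str]:
        return [sentence.text for sentence in nlp(text).sentences]

    return split
```

For example, `translate_by_sentence(text, stanza_splitter("en"), my_translate)` would translate an English text one sentence at a time, where `my_translate` stands in for whatever function actually calls the translation model.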